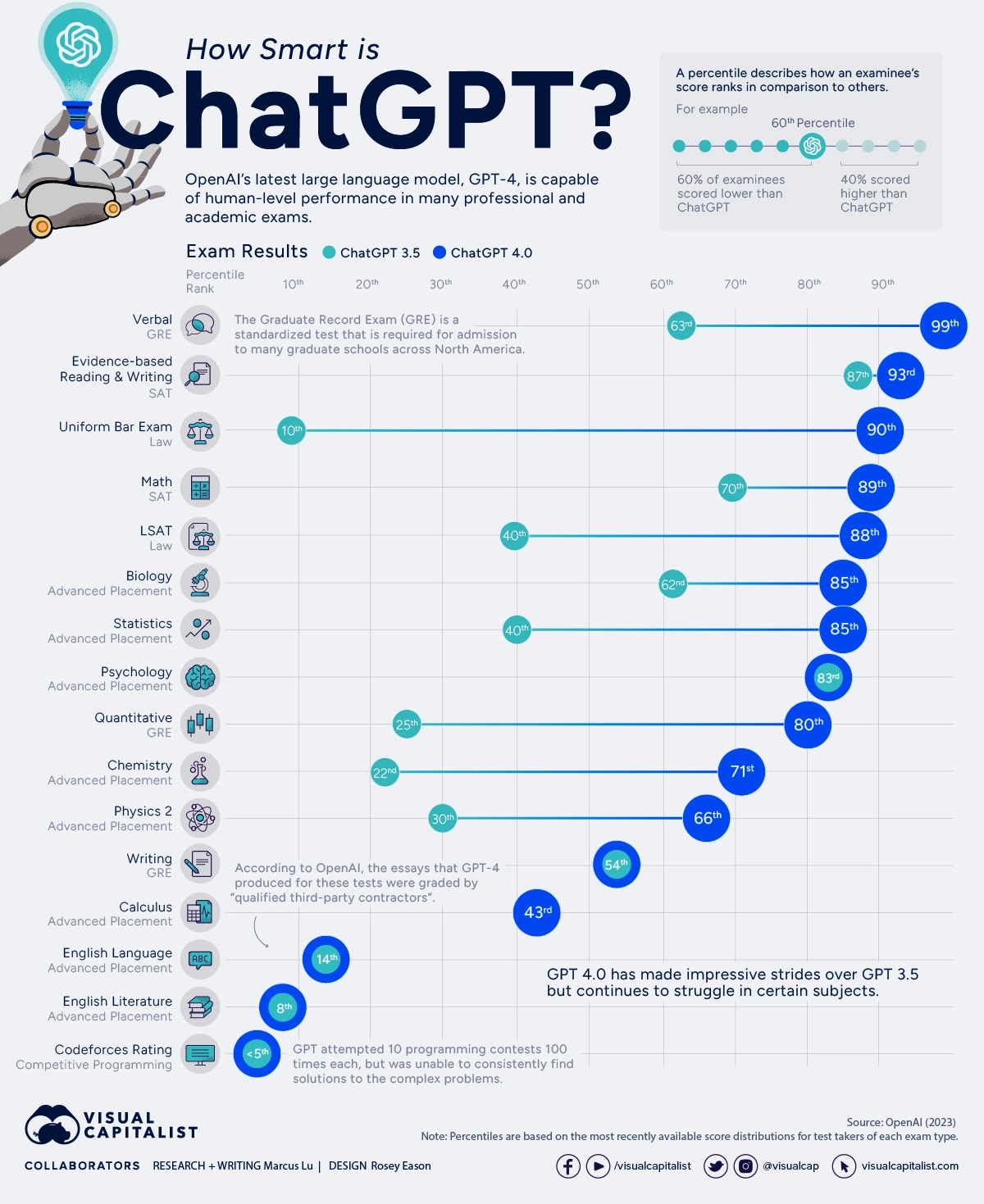

OpenAI's language model ChatGPT has been the subject of fierce debate since it first launched in November 2022, with opponents criticizing everything from its troubling responses to certain prompts, to the risk of it taking our jobs and promoting plagiarism in schools and colleges.

Before we worry too much, though, we should probably work out how smart ChatGPT actually is, right? Well, OpenAI did just that — and it turns out, it's pretty intelligent.

A technical report published by the research lab earlier this year showed the results of various professional and academic exams that OpenAI had ChatGPT, and its later model GPT-4, sit in order to demonstrate their capabilities.

As the chart shows, GPT-4 is a lot smarter than its predecessor, displaying human-level competence in numerous exams. Neither model seems to have quite got the hang of AP English or competitive programming just yet, though.

One test in which GPT-4 particularly excelled was the Uniform Bar Exam, where it placed in the 90th percentile, while ChatGPT scored higher than only 10 percent of test-takers.

Although GPT-4 performed very well in several exams, there's still room for improvement in a handful of areas, so you can probably relax about the robot uprising for a little while longer.

Via Visual Capitalist.