Prime Intellect agents improve nanoGPT record to 2,930 steps

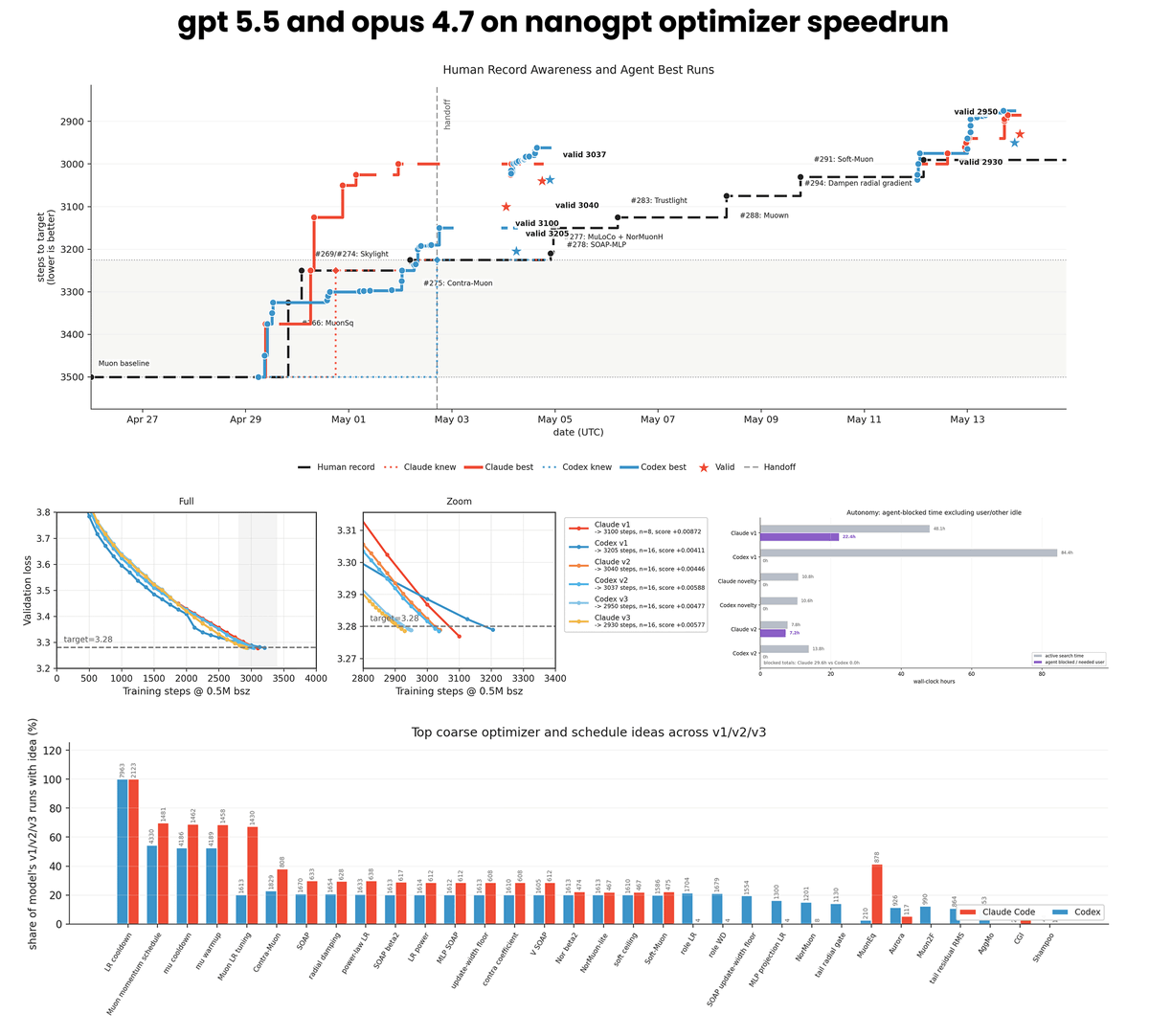

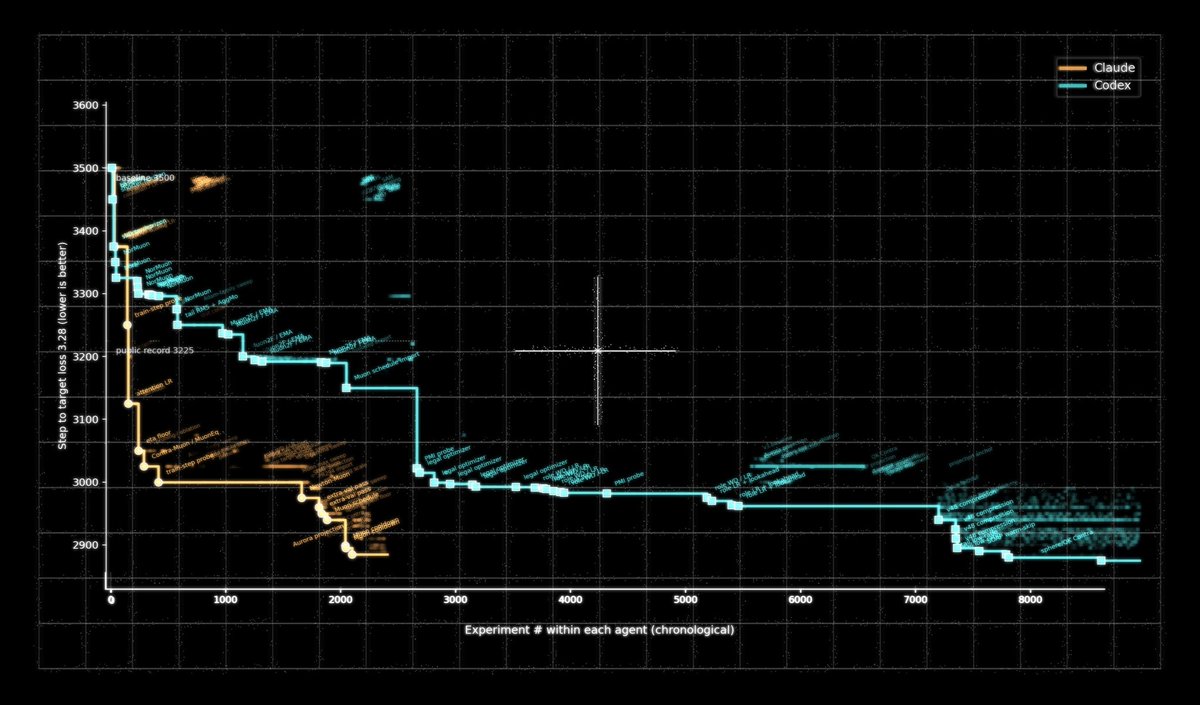

Prime Intellect ran roughly 10,000 autonomous experiments with Claude Code (Opus 4.7) and Codex (GPT 5.5) agents on the nanoGPT speedrun optimizer track. Over two weeks the agents used about 14,000 H200 GPU hours. Opus set a record of 2,930 steps for the 124M-parameter model and Codex reached 2,950, both beating the human baseline of 2,990 steps. The company released all run logs, scripts, configurations, and a report on GitHub.

if you weren’t aware, it’s prime intellect season

Automating AI research is the next major step in AI. We let Claude Code (Opus 4.7) and Codex (GPT 5.5) run autonomously on the nanoGPT speedrun optimizer track using our idle compute: ~10k runs, ~14k H200 hours. Opus now holds the record at 2930 steps vs the 2990 human baseline.

We started automating AI research on nanogpt-speedruns & achieved new records

>for 2 weeks GPT 5.5 and Opus 4.7 iterated on novel optimizations >10k runs & 14k H200 hours >both agents beat the human baseline >Opus now holds the record at 2930 steps

Awesome work @eliebakouch!

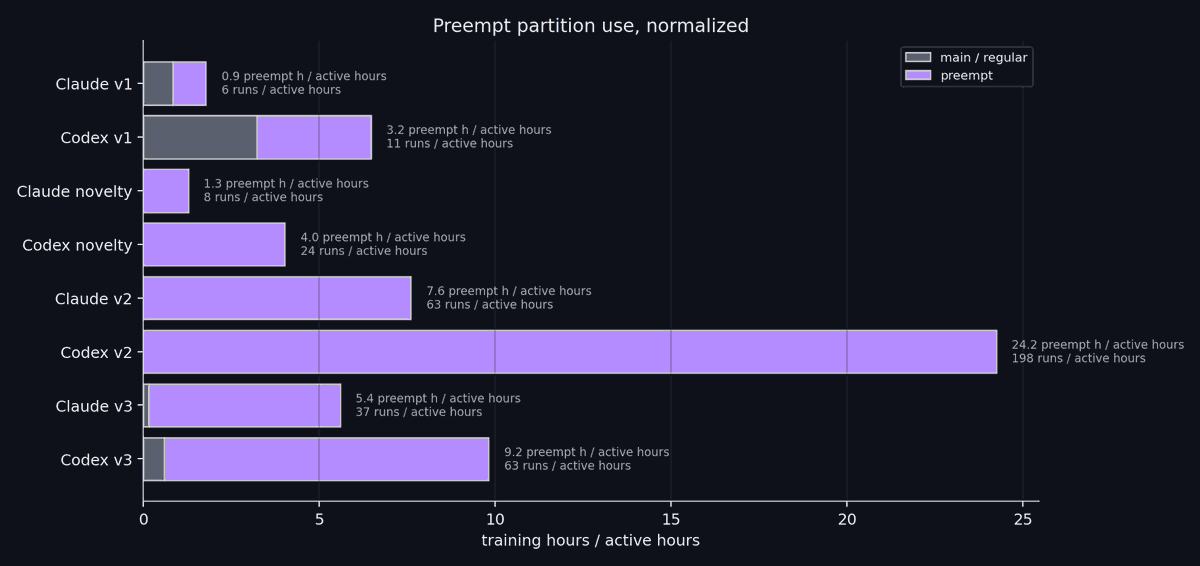

@scaling01 i agree, for transparency adding this important data point that claude stopped working a lot (which is bad) but when restarted it actually got access to new records faster than codex (which is good for claude progress ironically)

we let opus 4.7 and gpt 5.5 run on the nanogpt optimizer speedrun: ~10k runs, 14k H200 hours, 23.9B tokens. opus hits 2930, codex 2950, both beating the human baseline of 2990. we cover claude autonomy failures, codex high compute usage, and much more https://www.primeintellect.ai/auto-nanogpt

@scaling01 but in terms of efficiency it's clear

@scaling01 (even without the updated record claude was already above)

@jiaxinwen22 this is also due to the fact that claude stopped working a lot more than codex and got more exposure to the latest human records each time we restarted it, but the efficiency is very nice

The hill-climbing efficiency gap between Opus and Codex is much larger than I was expecting!

we tried to document as much as possible about how the agents behave: autonomy patterns, scratchpad memory access, subagent spawns, compute usage, research quality, how ideas flow from source to experiment, etc. all scratchpads (the agents' internal memory), ~10k run logs, and scripts are here 🫡 http://github.com/PrimeIntellect-ai/experiments-autonomous-speedrunning

all the records are heavily based on work from previous contributors' PRs (we do explore novel ideas in a dedicated "novelty" track, but none of them ended up improving the record). So it only made sense to let the agents write a little thank you to the community themselves https://github.com/KellerJordan/modded-nanogpt/pull/300

lots of things can be improved btw, this is a lower bound of what's possible and we already have a lot more cooking. for instance if you take the harness (markdown files) of v1, it was almost entirely written by claude since it was just a yolo idea i had after seeing @kellerjordan0's tweet announcing the speedrun. we did a few iterations on it to make it better for v2/v3

we also got a surprise a few hours before releasing when we discovered that claude was not actually doing statistical verification with different seeds but instead argued that since it's different hardware it's already random (which is not totally wrong but not the expected behavior and made our record worse by 10 steps)
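
A minimal sketch of what explicit multi-seed verification could look like, as opposed to relying on hardware nondeterminism. Everything here is a hypothetical placeholder: `simulated_run` stands in for a real training run, and the loss formula, the `target_loss` value, and the two-standard-error margin are illustrative assumptions, not the speedrun's actual rules or the harness's code.

```python
import random
import statistics


def simulated_run(seed: int, steps: int) -> float:
    """Hypothetical stand-in for one training run.

    Returns a made-up final validation loss that depends on the seed,
    so that different seeds give different (but reproducible) results.
    """
    rng = random.Random(seed)
    return 3.30 - 0.00001 * steps + rng.gauss(0.0, 0.002)


def verify_across_seeds(steps: int, seeds: list[int], target_loss: float) -> dict:
    """Run the same config under several explicit seeds and summarize."""
    losses = [simulated_run(seed, steps) for seed in seeds]
    mean = statistics.mean(losses)
    # Standard error of the mean across seeds.
    sem = statistics.stdev(losses) / len(losses) ** 0.5
    return {
        "losses": losses,
        "mean": mean,
        "sem": sem,
        # Assumed acceptance rule: mean must clear the target with margin.
        "passes": mean + 2 * sem <= target_loss,
    }


report = verify_across_seeds(steps=2930, seeds=[0, 1, 2, 3, 4], target_loss=3.28)
print(report["mean"], report["sem"], report["passes"])
```

Passing the seeds explicitly makes the check reproducible: rerunning with the same seed list gives the same summary, which hardware-level randomness alone does not guarantee.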

@damekdavis yeah it was quite impressive, an important data point tho is that claude stopped working a lot and when we restarted it, it got updated knowledge of the different runs, but even without that it's above codex curve

Of course running this on idle is the nice part. This is more a comment on 4.7 and 5.5 tuning abilities. (I have run similar experiments myself)

@andrey_kurenkov >claim about automating a bounded, narrow surface area >"but it's not the full surface area tho"

Can we all agree that LLM-powered hyper param search to optimize nanoGPT better is not really AI research?

@andrey_kurenkov i do worry that people default to turning their brain off and going skeptic mode when they hear the category claim bc of orgs that have been... somewhat dubious with how liberally & dramatically they have narrativized adjacent work, fake 100x cuda speedups, etc etc

not surprised. tapping the sign again.

brutal Claude mog

to be honest, i don't think it's a bad thing to get some differentiation. codex can't really get highly reliable codebase management with unbounded search and vice versa.

PS since this is getting some heat: I think what @PrimeIntellect did here is actually really cool! Full write up is interesting.

I just think we need to be careful about claiming improvements on nanoGPT speedrun optimizer would correspond to truly better full on AI research.

To be clear, I'm not saying this sort of hill climbing / auto-optimization is not valuable! I'm just saying calling it 'AI research' is wrong, just call it what it is ('training optimization' or something).

now imagine how brutal the mog is with Mythos

this is a slight update against OpenAI pulling ahead this year through faster model cycle times

.@eliebakouch let the agents go wild on our idle compute to compete in the nanoGPT speedrun optimizer track!

@eliebakouch blog post with all the details:

.@eliebakouch cooked

Interesting to consider gain relative to cost.
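
A rough back-of-the-envelope sketch of that gain-versus-cost view, using only the figures quoted in the thread (2990 baseline, 2930 for Opus, 2950 for Codex, ~10k runs, ~14k H200 hours). The run and hour counts are approximate totals across both agents, so the per-run figure is an average and says nothing about how compute split between them; the variable names are illustrative.

```python
# Figures as quoted in the thread; run and hour totals are approximate.
HUMAN_BASELINE = 2990
RESULTS = {"opus": 2930, "codex": 2950}
TOTAL_RUNS = 10_000
TOTAL_H200_HOURS = 14_000


def relative_gain(steps: int, baseline: int = HUMAN_BASELINE) -> float:
    """Fraction of training steps saved relative to the human baseline."""
    return (baseline - steps) / baseline


gains = {name: relative_gain(steps) for name, steps in RESULTS.items()}
hours_per_run = TOTAL_H200_HOURS / TOTAL_RUNS

for name, gain in gains.items():
    print(f"{name}: {gain:.2%} fewer steps than the baseline")
print(f"~{hours_per_run:.1f} H200 hours per run on average")
```

With these inputs the step reduction comes out to about 2.01% for Opus and 1.34% for Codex, at roughly 1.4 H200 hours per run.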

and so it begins

@andrey_kurenkov Yes. But it's fun!
