Waterloo researchers replicate DPR model in Pyserini

Researchers Xueguang Ma, Ronak Pradeep and University of Waterloo undergraduate Kai Sun replicated the 2020 Dense Passage Retrieval (DPR) model of Karpukhin et al. Their April 2021 arXiv study combined BM25 sparse retrieval with dense neural retrievers inside the Pyserini toolkit. The resulting hybrid methods appear in Pyserini and Pi-Serini, with evaluations on BEIR, LongEmbed and BrowseComp-Plus benchmarks. Recent extensions pair the retriever with search agents.

@lintool Cheeky not to count the index as its embeddings.

The DPR paper may have suggested that you no longer needed BM25, but it was known before hybrid search was popularized that neural models alone were insufficient. In particular, in our SIGIR paper (which slightly preceded the BEIR paper by @beirmug and colleagues), we found that strong neural rankers don't generalize well and underperform BM25. The @beirmag paper adds further findings, in particular that dense retrievers generalize more poorly than BERT-based rankers. The @UWaterloo team surely deserves credit for the ultimate demonstration of the usefulness of hybrid search. However, the need for hybrid search was also motivated by prior work. https://arxiv.org/abs/2103.03335

@mrdrozdov @xueguang_ma @mat_jacob1002 I think in that case one should blame the ranker, not hybrid search. With a better ranker, hybrid search + ranker should have outperformed vector search alone.

BTW, there's a truly unique (to my knowledge) @UWaterloo invention that has become an essential cog in the hybrid retrieval machine. Yet nobody is talking about it. It is reciprocal rank fusion (RRF), published by G. Cormack, @claclarke, and Stefan Büttcher. AFAIK, it is implemented and used nearly everywhere.
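For readers who haven't seen it: RRF scores each document by summing 1/(k + rank) over every ranked list it appears in, so the sparse and dense retrievers' raw scores never need to be normalized against each other. Below is a minimal Python sketch, assuming each input is a best-first list of document IDs. The function name `reciprocal_rank_fusion` is hypothetical, and k = 60 is the constant used in the Cormack, Clarke and Büttcher paper; this is not the code of any particular library.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of doc IDs with reciprocal rank fusion.

    rankings: list of lists, each ordered best-first (hypothetical input format).
    k: smoothing constant; 60 follows Cormack, Clarke and Buettcher.
    Returns doc IDs sorted by fused score, best first.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        # Ranks are 1-based: the top document contributes 1 / (k + 1).
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc ID for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


bm25_run = ["d1", "d2", "d3"]
dense_run = ["d3", "d1", "d4"]
print(reciprocal_rank_fusion([bm25_run, dense_run]))
```

With these toy runs, the fused order is d1, d3, d2, d4: d1 sits near the top of both lists, so it beats d3, which is first in one list but last in the other.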

I think @xueguang_ma is being too modest, so I'll provide context: he along with @rpradeep42 and a UWaterloo ugrad (Kai Sun) popularized hybrid search in its current form.

So, if you're using hybrid search today, thank them. 🙏

Yes, this is clickbait-y, so I'll support my claims 🧵

This plot reminds me of my first IR work, reproducing DPR in Pyserini, where we found that BM25 is amazingly helpful when hybridized with a dense retriever. BM25 is never just a simple baseline: used the right way, it can easily outperform many fancy methods. BEIR showed BM25 to be the most robust method, LongEmbed showed it to be the most effective and efficient method for long-context search, and now @mattjustram and @xuzihuan4 show that BM25 can push search agents onto the best efficiency frontier. p.s. Pyserini and pi-serini are two different repos.

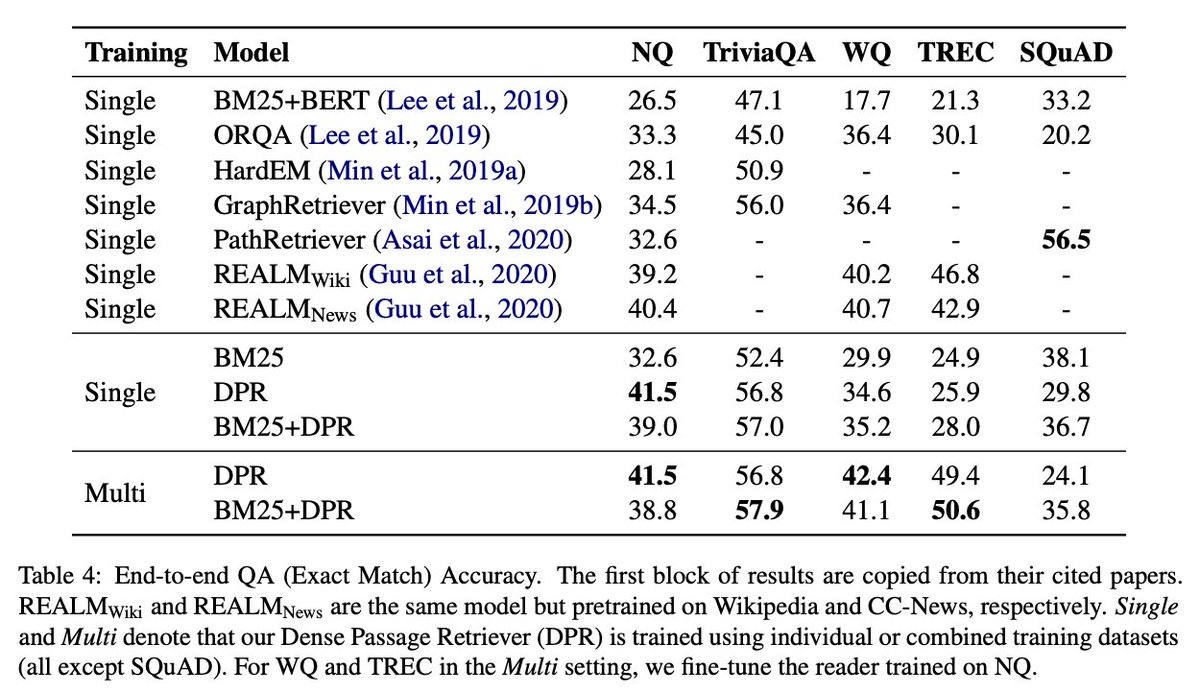

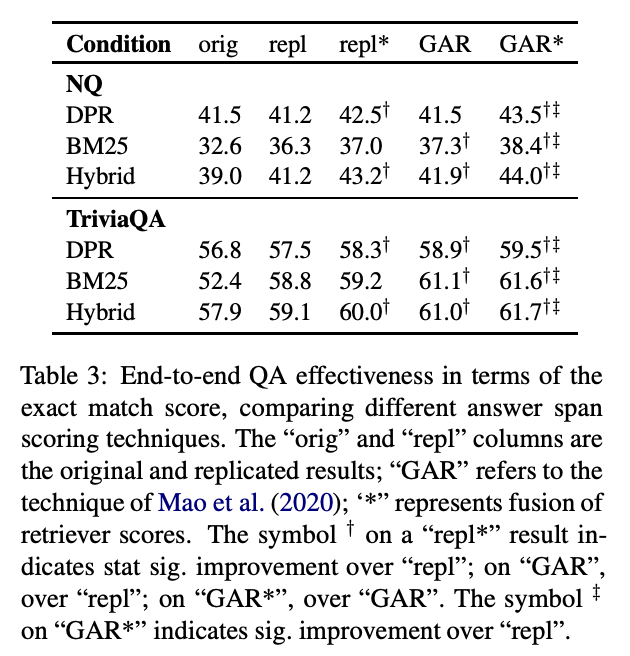

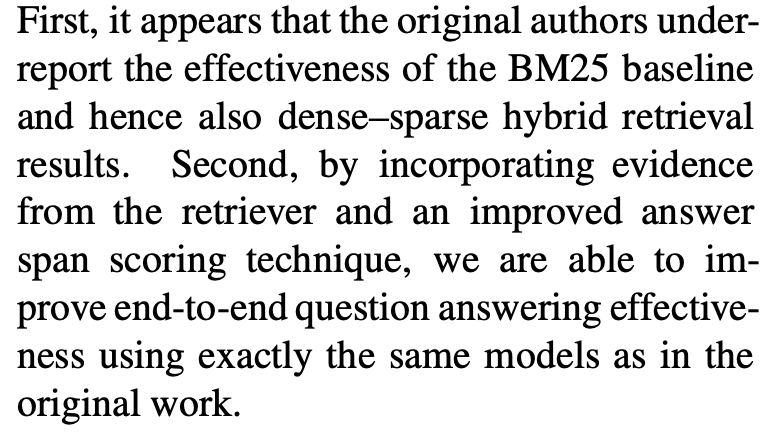

The original DPR paper https://aclanthology.org/2020.emnlp-main.550/ claimed that with dense retrieval, you no longer needed BM25.

But that's not what we found: even with DPR, a dense-sparse hybrid with BM25 is significantly better than DPR alone. https://arxiv.org/abs/2104.05740
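One common way to build a dense-sparse hybrid is a weighted sum of the two retrievers' scores. The sketch below is illustrative only and is not claimed to be the exact fusion used in the replication study. It assumes each retriever returns a `{doc_id: score}` dict, min-max normalizes each run (an assumption), scores a document missing from one run as 0 on that side, and combines them as dense + alpha * sparse. The names `hybrid_fuse` and `min_max_normalize` and the weight `alpha` are hypothetical; `alpha` is something you would tune on held-out queries.

```python
def min_max_normalize(scores):
    """Rescale a {doc_id: score} dict to [0, 1]; a constant run maps to 0."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {d: 0.0 for d in scores}
    return {d: (s - lo) / (hi - lo) for d, s in scores.items()}


def hybrid_fuse(dense, sparse, alpha=0.5):
    """Hypothetical linear fusion: normalized dense + alpha * normalized sparse.

    A doc absent from one run gets 0 for that component, i.e. the same
    value as that run's lowest-scoring doc after min-max normalization.
    Returns doc IDs sorted by fused score, best first.
    """
    dense_n = min_max_normalize(dense)
    sparse_n = min_max_normalize(sparse)
    docs = set(dense_n) | set(sparse_n)
    fused = {d: dense_n.get(d, 0.0) + alpha * sparse_n.get(d, 0.0) for d in docs}
    # Highest fused score first; ties broken by doc ID for determinism.
    return sorted(fused, key=lambda d: (-fused[d], d))


dense_run = {"d1": 80.0, "d2": 78.0, "d3": 70.0}   # e.g. inner-product scores
sparse_run = {"d3": 12.0, "d4": 9.0, "d1": 6.0}    # e.g. BM25 scores
print(hybrid_fuse(dense_run, sparse_run, alpha=1.5))
```

Normalizing first keeps `alpha` interpretable, since raw inner-product and BM25 scores live on very different scales. In this toy run, a large enough `alpha` lets d3, which BM25 ranks first but the dense retriever ranks last, move to the top.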

Thus, our conclusions: This I believe is the first demonstration of the need for hybrid search. Hence the claim that hybrid search is a @UWaterloo innovation. You're welcome!

The broader lesson is that old baselines are still surprisingly important. Let's not forget them.

Since we're counting model parameters, let me introduce you to a two-parameter model for agentic search that's awesome: It's called BM25. I haven't tried it yet, but I think fp4 will work fine. https://arxiv.org/abs/2605.10848
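BM25's two parameters are k1, which controls how quickly repeated occurrences of a term stop adding to the score, and b, which controls how strongly scores are normalized by document length. Here is a minimal pure-Python sketch of Okapi BM25, assuming pre-tokenized text and the Lucene-style non-negative IDF. The function name `bm25_scores` and the defaults k1 = 0.9, b = 0.4 are illustrative choices, not a claim about any toolkit's configuration.

```python
import math
from collections import Counter


def bm25_scores(query, docs, k1=0.9, b=0.4):
    """Score each tokenized doc against a tokenized query with Okapi BM25.

    k1: term-frequency saturation; b: document-length normalization.
    IDF is the Lucene-style log(1 + (N - df + 0.5) / (df + 0.5)).
    """
    if not docs:
        return []
    n_docs = len(docs)
    avgdl = sum(len(d) for d in docs) / n_docs
    # Document frequency: number of docs containing each term at least once.
    df = Counter(term for d in docs for term in set(d))
    scores = []
    for doc in docs:
        tf = Counter(doc)
        # Length-dependent part of the denominator, shared by all terms.
        norm = k1 * (1 - b + b * len(doc) / avgdl)
        score = 0.0
        for term in query:
            if term not in tf:
                continue  # non-matching terms contribute nothing
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            score += idf * tf[term] * (k1 + 1) / (tf[term] + norm)
        scores.append(score)
    return scores


docs = [
    ["dense", "passage", "retrieval"],
    ["bm25", "is", "a", "strong", "baseline"],
    ["hybrid", "bm25", "dense", "retrieval"],
]
print(bm25_scores(["bm25", "retrieval"], docs))
```

Raising b penalizes long documents more heavily, while setting k1 = 0 collapses each matching term's contribution to its IDF, ignoring term frequency entirely.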

But I think we can do better... what about zero parameters? Let me introduce you to something else that's awesome: It's called grep. https://arxiv.org/abs/2605.05242

@srchvrs @xueguang_ma @mat_jacob1002 Indeed. No silver bullet. :)
