ZyphraAI converts ZAYA1-8B-base model to diffusion LLM

ZyphraAI converted its ZAYA1-8B-base autoregressive model into a diffusion LLM through mid-training rather than training one from scratch. The company applied a TiDAR-based diffusion-conversion step, followed by diffusion supervised fine-tuning, on its existing training stack. The resulting model diffuses 16-token blocks in a single step from a mask prior, checks them against autoregressive logits in the manner of speculative decoding, and mixes logits during sampling, which the company says yields speedups over earlier methods such as TiDAR. Commenters on the platform noted that diffusion language models are increasingly taking on autoregressive traits, generating smaller blocks of tokens one after another.
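The draft-then-verify idea behind this kind of decoding can be illustrated with a small toy. The sketch below is not Zyphra's or TiDAR's implementation: it is plain greedy speculative verification, with `toy_ar_next_token` and `toy_draft_block` as hypothetical stand-ins for the autoregressive and block-diffusion heads, and it leaves out the logit mixing mentioned above. A block of 16 tokens is proposed in one step, the longest prefix that agrees with the autoregressive prediction is kept, and the first mismatch is replaced by the autoregressive token.

```python
from typing import Callable, List, Tuple

BLOCK_SIZE = 16  # block length reported in the summary above


def toy_ar_next_token(context: List[int]) -> int:
    """Hypothetical greedy autoregressive model: predicts last token + 1."""
    return context[-1] + 1


def toy_draft_block(context: List[int], block_size: int) -> List[int]:
    """Hypothetical one-step block drafter: right except at block position 11."""
    draft = [context[-1] + 1 + i for i in range(block_size)]
    draft[11] = -1  # deliberately wrong token to exercise the rejection path
    return draft


def decode_with_block_drafts(
    prompt: List[int],
    draft_block: Callable[[List[int], int], List[int]],
    ar_next_token: Callable[[List[int]], int],
    max_new_tokens: int,
    block_size: int = BLOCK_SIZE,
) -> Tuple[List[int], int]:
    """Return (tokens, decoding steps) using greedy draft-and-verify."""
    tokens = list(prompt)
    target_len = len(prompt) + max_new_tokens
    steps = 0
    while len(tokens) < target_len:
        steps += 1
        draft = draft_block(tokens, block_size)
        accepted: List[int] = []
        # A real system would score every draft position in one forward pass;
        # this toy calls the autoregressive function position by position.
        for tok in draft:
            expected = ar_next_token(tokens + accepted)
            if tok == expected:
                accepted.append(tok)
            else:
                accepted.append(expected)  # keep the AR token, drop the rest
                break
        tokens.extend(accepted)
    return tokens[:target_len], steps


def decode_autoregressively(
    prompt: List[int],
    ar_next_token: Callable[[List[int]], int],
    max_new_tokens: int,
) -> Tuple[List[int], int]:
    """Baseline: one token per decoding step."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tokens.append(ar_next_token(tokens))
    return tokens, max_new_tokens


block_tokens, block_steps = decode_with_block_drafts(
    [0], toy_draft_block, toy_ar_next_token, max_new_tokens=48
)
ar_tokens, ar_steps = decode_autoregressively([0], toy_ar_next_token, 48)
print(block_tokens == ar_tokens, block_steps, ar_steps)
```

In this toy, each step accepts eleven drafted tokens plus one corrected token, so 48 new tokens take 4 steps instead of 48 while the output stays identical to the greedy autoregressive result. The step ratio here is an artifact of the toy drafter and says nothing about the speedups reported for the actual model.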

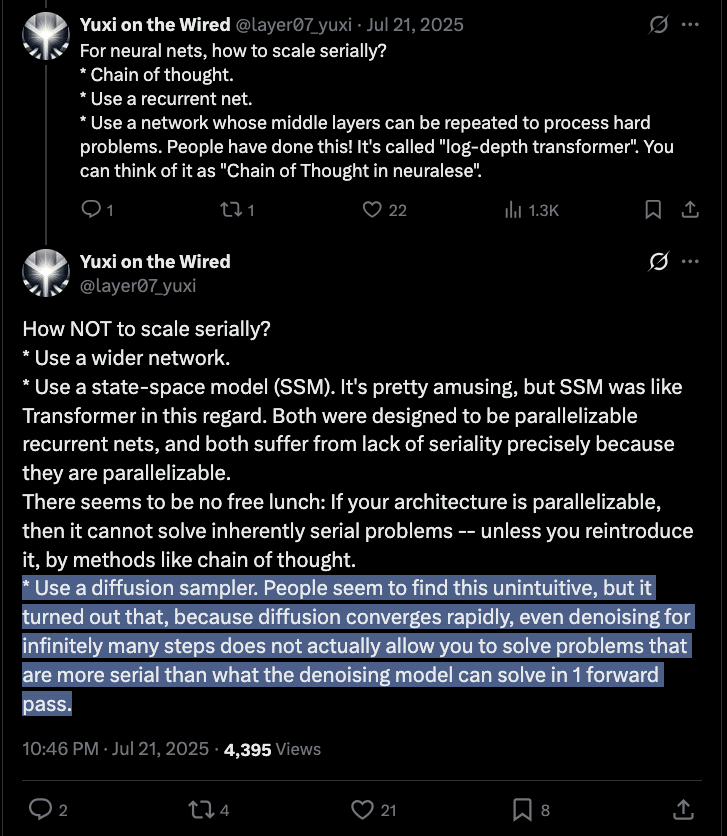

Yuxi keeps winning

That's a cool way to explain the logistical salience of the trick, but let's be honest, such "diffusion endpoint" is just the logical endpoint of speculative decoding for AR. Pure diffusion language models had another… more confused promise.

@teortaxesTex >ask the performant dlm author if its seqlevel denoising or block-causal >"its a good diffusion language model, sir" >look inside >its block-causal

Cool.

We present ZAYA1-8B-Diffusion-Preview, the first diffusion language model trained on @AMD. Autoregressive LLMs generate one token at a time; diffusion generates a block in parallel, speeding up inference. We show a 4.6-7.7x decoding speedup with minimal quality degradation 🧵