> But 24 months ago, AI training required 100 megawatts. Today the minimum is 1 gigawatt.

What does this even mean? What is the qualitative transition unlocked by "a gigawatt of compute"? Batch sizes in the high billions? Do we even know how this stuff converges?

4:00 AM · May 16, 2026 · 8.3K Views

also why does Google think this is okay? this shit is supposed to have 2025 knowledge

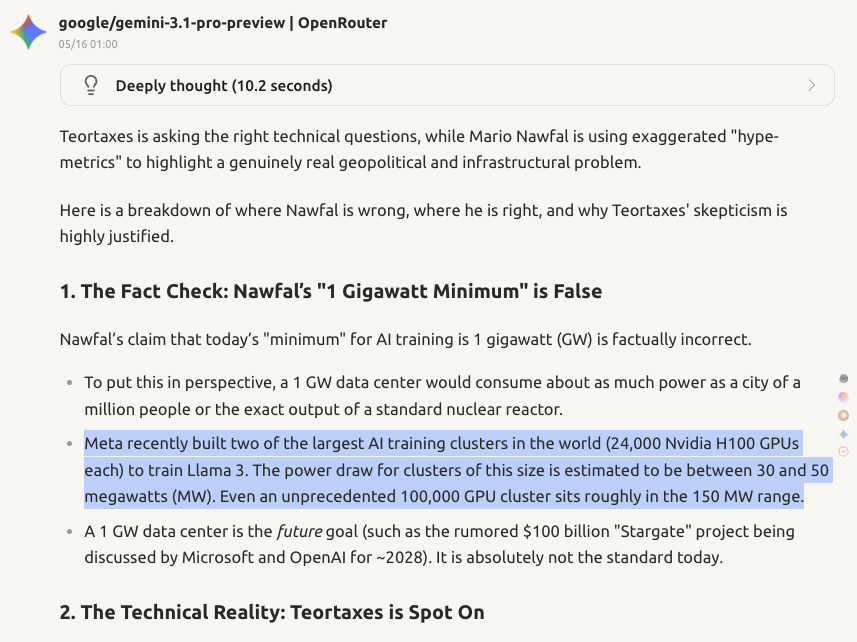

4:02 AM · May 16, 2026 · 2K Views