Nvidia releases SANA-WM open-source world model

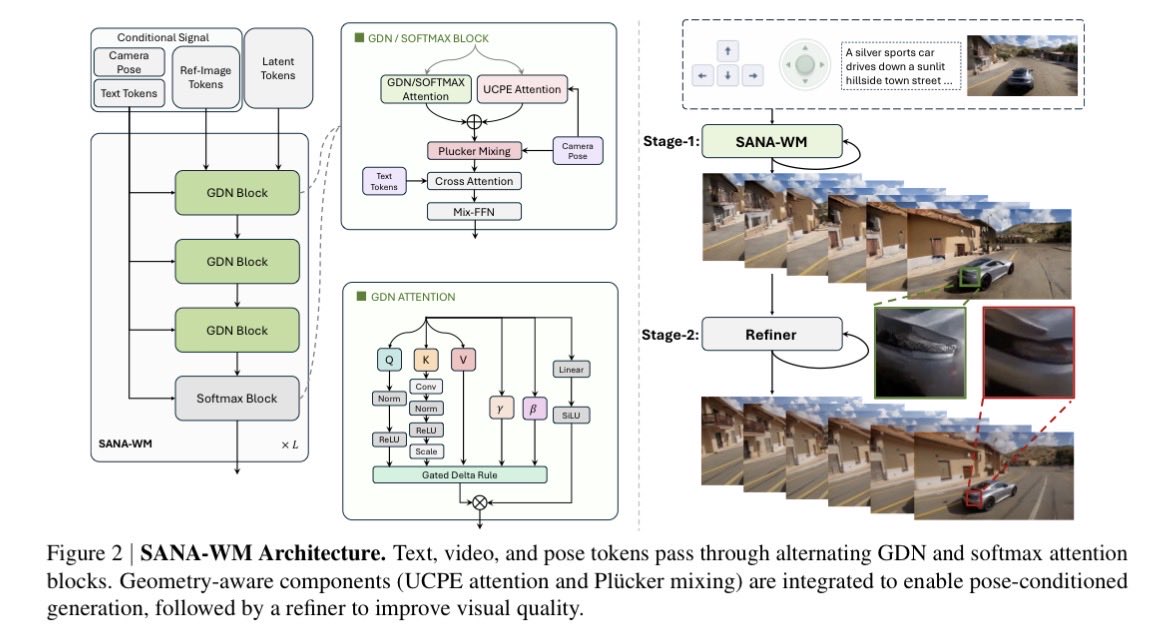

Nvidia released SANA-WM, a 2.6-billion-parameter open-source world model for generating controllable video and simulated environments. Given a single image, a text prompt, and 6-DoF camera controls, the model produces 720p videos up to 60 seconds long while maintaining physical consistency. It was trained on public datasets and runs locally on consumer GPUs such as the RTX 5090, generating clips in around 34 seconds.
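For a rough sense of why a model of this size fits on a consumer GPU, here is a back-of-envelope sketch of the memory needed for the weights alone. The precision formats are assumptions for illustration (the summary does not say which one SANA-WM uses), and the estimate ignores activations and any auxiliary components such as encoders or decoders.

```python
# Parameter count stated in the summary above.
PARAMS = 2.6e9

# Bytes per parameter for common numeric formats.
# Assumption: which format SANA-WM actually ships in is not stated in the source.
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "int8": 1}


def weight_gib(params: float, bytes_per_param: int) -> float:
    """Memory for the weights only, in GiB (no activations, no optimizer state)."""
    return params * bytes_per_param / 2**30


if __name__ == "__main__":
    for name, nbytes in BYTES_PER_PARAM.items():
        print(f"{name}: {weight_gib(PARAMS, nbytes):.1f} GiB")
```

Even at full fp32 precision the weights come in under 10 GiB, which leaves headroom on a card like the RTX 5090 with 32 GB of VRAM. Actual peak usage during generation will be higher and depends on details the summary does not give.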

Great Nvidia release, but maybe it's time to admit that "world model" is more about intent than architecture. This is generative video with controls; it is not related to JEPA.

Aside from the fact that they cannot easily manipulate visuals, there's no reason not to extend this to language AR models/agents.

I don’t understand how this can be 2.6B params