Engineers trace nanoGPT speedrun spikes to 2015 Marathi blog

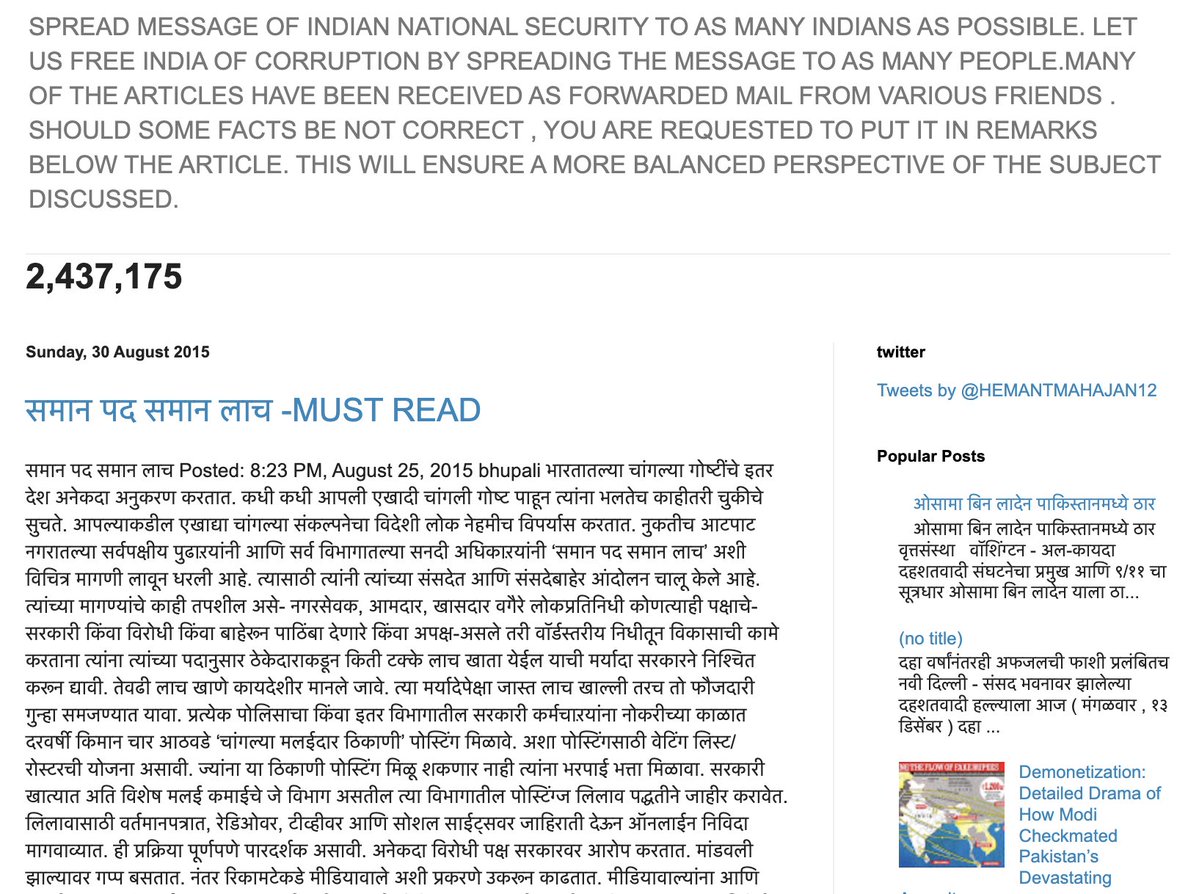

Research engineers tracking nanoGPT training runs identified a 2015 Marathi-language blog post dated 30 August as the source of repeated speedrun spikes and optimizations. The post mixes English national-security messaging with dense Devanagari text and has evaded standard filters, allowing undetected circulation in AI datasets. Twitter users linked the anomalies directly to this single source.

ok i'm starting to suspect many nanogpt speedrun spikes/anomalies (and maybe even minute optimization) can be tracked to this one marathi blog that somehow evade the English filter.

Effect is not as striking but seems like I can attribute most spikes to very long docs with excessive topic focus: transcript meeting, academic blog and… a macgyver fanfic (?)

ok i'm starting to suspect many nanogpt speedrun spikes/anomalies (and maybe even minute optimization) can be tracked to this one marathi blog that somehow evade the English filter.

loooool

ok i'm starting to suspect many nanogpt speedrun spikes/anomalies (and maybe even minute optimization) can be tracked to this one marathi blog that somehow evade the English filter.