Pranav Shyam calls for moratorium on new optimizers

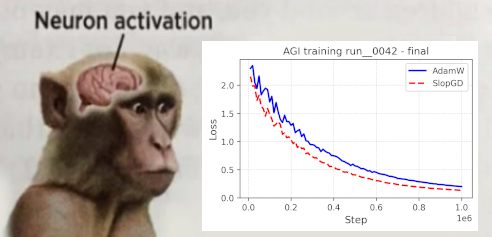

Pranav Shyam called for a moratorium on new optimizers until the field understands what is going on with existing methods. The call follows a remark that Shampoo was meant to put an end to the long list of Adam variants, yet Shampoo with beta2 set to zero is now called Muon and has itself spawned tens of variants that are only marginally better. Rohan Anil described this pattern of incremental changes after Muon. Keller Jordan said his benchmark was supposed to fix such proliferation, but that he is not sure it is working.
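To make the "Shampoo with beta2 = 0" remark concrete, here is a minimal NumPy sketch under idealized assumptions: a single matrix-shaped gradient, no accumulated history, no epsilon damping, and no momentum. Under those assumptions the Shampoo-style update `L^{-1/4} G R^{-1/4}` with `L = G G^T` and `R = G^T G` reduces to `U V^T` from the SVD of `G`, which is the orthogonalized gradient that Muon approximates (Muon is usually described as applying a Newton-Schulz iteration to a momentum buffer rather than an exact SVD). The function names below are hypothetical and are not taken from any optimizer library.

```python
import numpy as np


def inv_fourth_root(sym: np.ndarray, rel_tol: float = 1e-10) -> np.ndarray:
    """Pseudo-inverse fourth root of a symmetric PSD matrix (illustrative helper)."""
    vals, vecs = np.linalg.eigh(sym)
    # Treat eigenvalues that are numerically zero as a null space and skip them.
    keep = vals > rel_tol * vals.max()
    powered = np.zeros_like(vals)
    powered[keep] = vals[keep] ** -0.25
    # Scale each eigenvector column, then rotate back.
    return (vecs * powered) @ vecs.T


def shampoo_update_no_history(grad: np.ndarray) -> np.ndarray:
    """Shampoo-style update using only the current gradient (the beta2 = 0 case)."""
    left = grad @ grad.T    # L = G G^T
    right = grad.T @ grad   # R = G^T G
    return inv_fourth_root(left) @ grad @ inv_fourth_root(right)


def orthogonalize(grad: np.ndarray) -> np.ndarray:
    """Exact orthogonalization U V^T via a thin SVD (Muon approximates this)."""
    u, _, vt = np.linalg.svd(grad, full_matrices=False)
    return u @ vt


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    g = rng.standard_normal((4, 6))  # stand-in for one weight matrix's gradient
    gap = np.abs(shampoo_update_no_history(g) - orthogonalize(g)).max()
    print(f"max abs difference between the two updates: {gap:.2e}")
```

Practical Shampoo implementations accumulate the `L` and `R` statistics over steps and add damping, so the equivalence shown here holds only for this stripped-down case.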

@zacharynado @sasuke___420 I remember this table! Though to be fair, Shampoo and KFac are in there too

the sloptimizer field is just getting started with shampoo and muon gen algorithms, the graveyard of adam variants got so bad you can't list them all on a page

We did Shampoo so we kill all the variants of Adam. Now we call Shampoo b2=0.0 as Muon, then created 10s of variants thats marginally better.

Moratorium on new optimizers until we figure out whats going on

@recurseparadox This was what my benchmark was supposed to fix. But I’m not sure it’s working

from @frankstefansch1's https://arxiv.org/abs/2007.01547

maybe we have been too fast to accuse auto-research. just primary male urge to optimize.

@zacharynado slopGD is crazy 😭😭

A big take away from @jeankaddour and Oscar’s “No Train No Gain” paper (https://arxiv.org/abs/2307.06440, NeurIPS 2023) is that Adam with LR decay is extremely difficult to beat, and many complex training strategies do not really buy you anything

5yo seeing a hole on the beach: what if we digged further. 20-30 something seeing a loss valley:

@typedfemale Join my cause
