Anthropic retires Claude Sonnet 4 and Opus 4

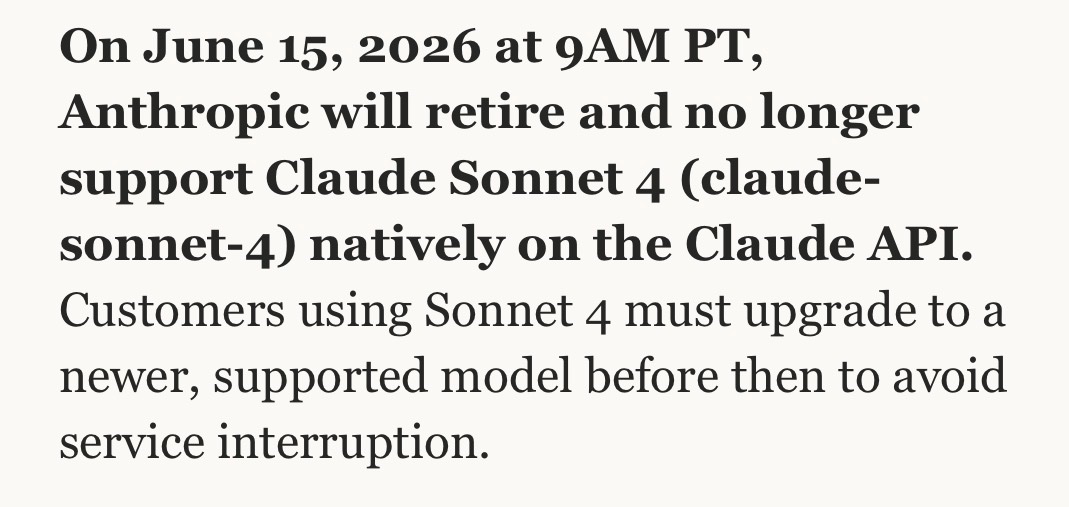

Anthropic will retire Claude Sonnet 4 and Claude Opus 4 from native support on the Claude API on June 15, 2026, at 9 AM PT. Users must migrate to newer models, including Opus 4.7. Opus 4 had already shown reduced availability and higher error rates, and the move follows its earlier, unannounced removal from Claude.ai. AI safety researcher Neil Chowdhury noted that retiring Sonnet 4 ends access to a model free of the constitutional classifiers applied to newer versions.

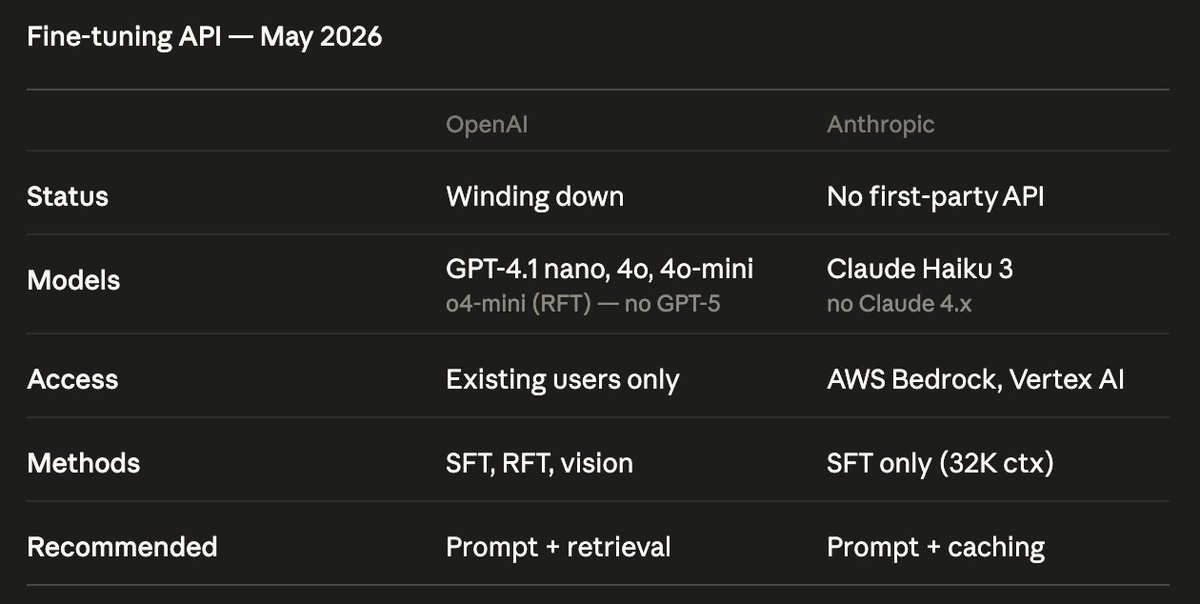

i actually missed this: both OpenAI and Anthropic seem to be winding down their fine-tuning APIs. the most recent models like GPT-5.x and Claude 4.x are not available for fine-tuning at all. why? misuse risks?

this also seems relevant for continual learning (e.g., RL on user rollouts from Claude Code / Codex CLI) that produces personalized LoRA adapters for different users. probably the main reason it hasn't been deployed is that it's a potential nightmare for safety and alignment.

for example, this is what https://developers.openai.com/api/docs/guides/supervised-fine-tuning says:

@PandaAshwinee hmm, then why are they getting rid of it? is it hard infra-wise? or compute shortage?

@maksym_andr no way they’re getting rid of finetuning bc it’s too risky when you can still just multi-turn prefill the model, right?

this model deprecation will probably stifle my work + other safety researchers’. Sonnet 4 is the best Anthropic model not subject to constitutional classifiers, which frequently over-fire on model behavior research, even when it has nothing to do with chem/bio/jailbreaks.

@maksym_andr I guess yet another casualty of compute shortage
