John Carmack shares PyTorch squared distance code

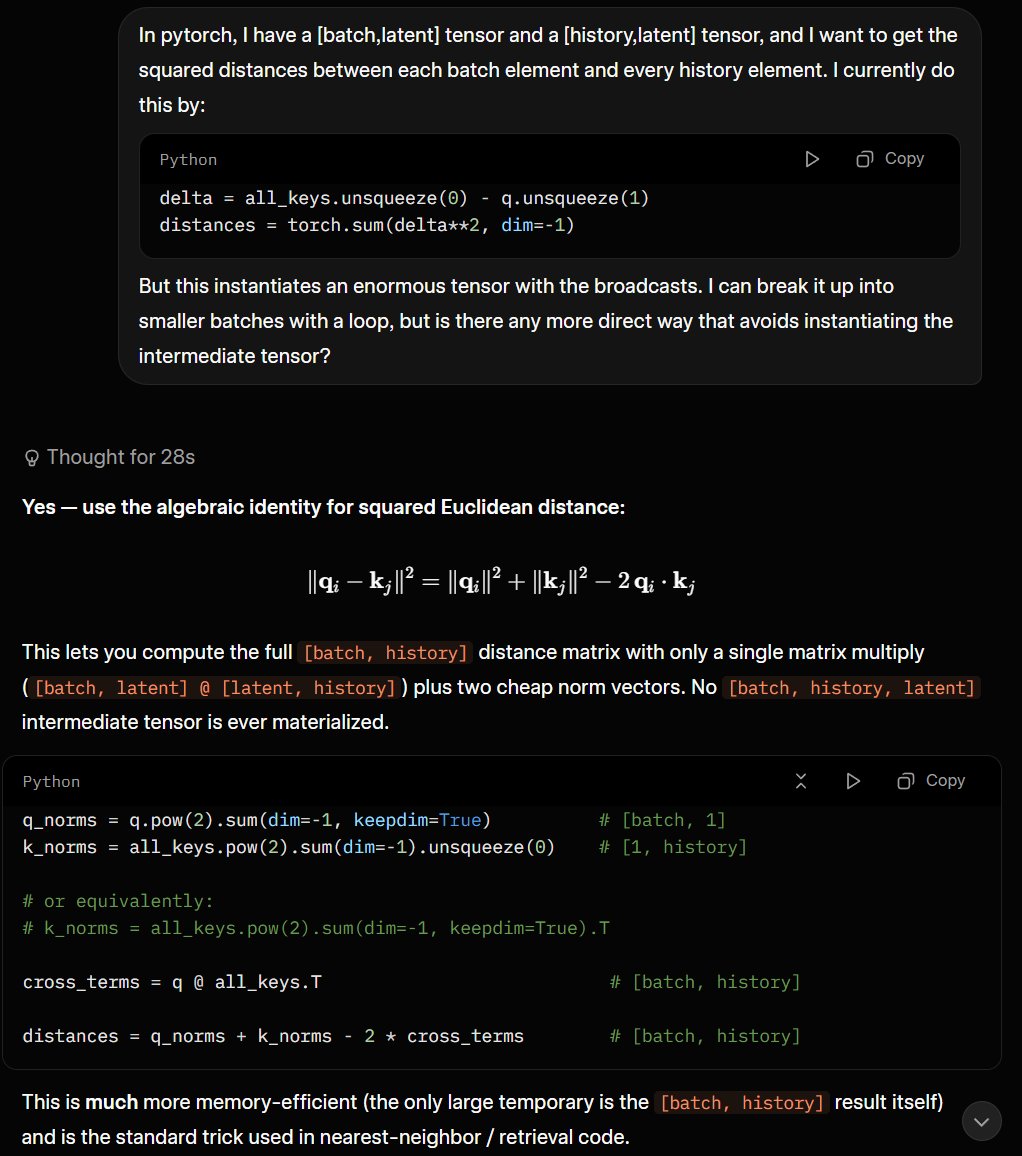

John Carmack shared a PyTorch implementation for computing squared Euclidean distances between batch embeddings of shape [batch, latent] and history embeddings of shape [history, latent]. It relies on the algebraic identity ‖a − b‖² = ‖a‖² + ‖b‖² − 2 a·b: the squared norms are precomputed with pow(2).sum, and a single matrix multiply supplies the cross terms, so the large intermediate tensor of pairwise differences is never materialized. Researchers Andreas Kirsch and Lucas Beyer replied, noting its value for k-nearest-neighbor lookups and that, for normalized vectors, squared distance and dot-product similarity are equivalent. Carmack leads AGI research at Keen Technologies.
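The approach described above can be sketched roughly as follows. This is a minimal illustration based on the summary, not Carmack's actual code; the function name `squared_distances`, the tensor sizes, and the final clamp are assumptions added here.

```python
import torch

def squared_distances(batch: torch.Tensor, history: torch.Tensor) -> torch.Tensor:
    """Hypothetical helper: [batch, latent] x [history, latent] -> [batch, history]."""
    # Squared norms of each row, shaped so they broadcast against each other.
    batch_sq = batch.pow(2).sum(dim=1, keepdim=True)     # [batch, 1]
    history_sq = history.pow(2).sum(dim=1).unsqueeze(0)  # [1, history]
    # Cross terms from one matrix multiply instead of a 3-D difference tensor.
    cross = batch @ history.T                            # [batch, history]
    # Assumption: clamp at zero, since rounding can leave tiny negative values.
    return (batch_sq + history_sq - 2.0 * cross).clamp(min=0.0)

torch.manual_seed(0)
batch = torch.randn(4, 8)     # illustrative sizes
history = torch.randn(16, 8)
d2 = squared_distances(batch, history)

# Reference version that materializes the [batch, history, latent] differences.
naive = (batch[:, None, :] - history[None, :, :]).pow(2).sum(dim=-1)
```

The naive version allocates batch × history × latent elements before reducing, while the matmul version only ever holds the [batch, history] result.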

@ID_AA_Carmack yeah and if they are normalized, then dot vs square dist are equivalent, which is a pretty neat party trick.
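For unit-length vectors the identity collapses to ‖a − b‖² = 2 − 2 a·b, so ranking by smallest squared distance and ranking by largest dot product agree. A small sketch of that check, with made-up tensor sizes and variable names:

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
# Illustrative data, normalized to unit length along the latent dimension.
a = F.normalize(torch.randn(4, 8), dim=1)
b = F.normalize(torch.randn(16, 8), dim=1)

dots = a @ b.T                                              # [4, 16]
sq_dist = (a[:, None, :] - b[None, :, :]).pow(2).sum(-1)    # [4, 16]

# With unit norms, squared distance is an affine function of the dot product.
from_dots = 2.0 - 2.0 * dots

nearest_by_dist = sq_dist.argmin(dim=1)
nearest_by_dot = dots.argmax(dim=1)
```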

I'm a little disappointed with myself that the high school algebra identity didn't occur to me right away.

@ID_AA_Carmack Yeah this is an important optimization for kNN lookups
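As a rough illustration of the kNN use case, the squared-distance matrix can be fed straight into `torch.topk`. The helper name `knn` and the sizes below are hypothetical, and this is only a sketch of one way to do the lookup:

```python
import torch

def knn(batch: torch.Tensor, history: torch.Tensor, k: int):
    """Hypothetical helper: the k nearest history rows for each batch row."""
    # Norms plus one matmul give the [batch, history] squared distances.
    d2 = (batch.pow(2).sum(dim=1, keepdim=True)
          + history.pow(2).sum(dim=1)
          - 2.0 * (batch @ history.T))
    # largest=False returns the k smallest distances, sorted ascending.
    return torch.topk(d2, k, dim=1, largest=False)

torch.manual_seed(0)
history = torch.randn(32, 8)
queries = history[[2, 5, 7]]          # query rows copied from history
dists, idx = knn(queries, history, k=3)
# Each query's nearest neighbor should be the row it was copied from.
```

Because squaring is monotonic for non-negative values, no square root is needed to find the nearest neighbors.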