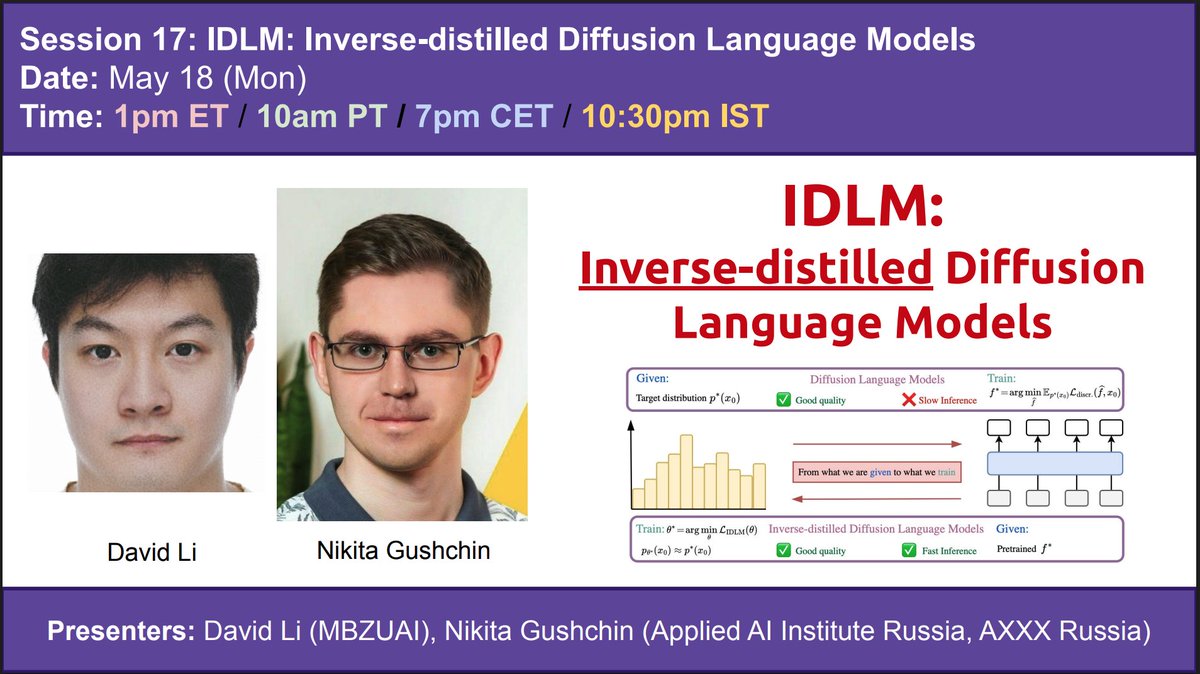

📢 May 18 (Mon): IDLM: Inverse-distilled Diffusion Language Models

🤔Diffusion Language Models (DLMs) have recently achieved strong results in text generation. However, their multi-step sampling leads to slow inference, limiting practical use.

💡To address this, the authors extend Inverse Distillation, a technique originally developed to accelerate continuous diffusion models, to the discrete setting. This extension, though, introduces both theoretical and practical challenges.

🔧To overcome these challenges, the authors first provide a theoretical result demonstrating that their inverse formulation admits a unique solution, thereby ensuring valid optimization. They then introduce gradient-stable relaxations to support effective training.

📊Experiments on multiple DLMs show that their method, Inverse-distilled Diffusion Language Models (IDLM), reduces the number of inference steps by 4× to 64× while preserving the teacher model’s entropy and generative perplexity.

This Monday, David Li (https://scholar.google.com/citations?user=L88Qc4YAAAAJ&hl=ru) and Nikita Gushchin (https://scholar.google.com/citations?user=UaRTbNoAAAAJ&hl=ru) will present their jointly led paper, which was recently accepted at ICML 2026.

Collaborators on this work include: Dmitry Abulkhanov (@dabulkhanov_), Eric Moulines (https://scholar.google.com/citations?user=_XE1LvQAAAAJ&hl=fr), Ivan Oseledets (@oseledetsivan), Maxim Panov (@maxim_panov), and Alexander Korotin (https://akorotin.netlify.app/).

Paper link: https://arxiv.org/abs/2602.19066