arXiv clarifies one-year ban for unchecked LLM submissions

arXiv has clarified penalties for submissions containing unverified LLM-generated content. Papers showing hallucinated references or leftover AI meta-comments now trigger a one-year platform ban. After the ban, authors must first secure acceptance at a reputable peer-reviewed venue before resubmitting. Thomas G. Dietterich of Oregon State University outlined the updated rules in a public thread that researchers have since shared widely.

@DimitrisPapail @tdietterich @roydanroy It was always gated: you needed one endorsement from someone. That already protected against a lot of slop back then. But now that incentives globally and "the game"[1] have changed, it makes sense to change the gating.

[1] Trust me, I hate that it even makes sense to call it that, but it does.

arXiv was never high SNR. It has had slop since way before LLMs, and a fake P=NP proof once a month for two decades, and it has always been usable. Its strength was never the average correctness of the papers on it, but open access to text and artifacts and an easy way to reference work. Correctness gets established downstream by the people who actually use the work.

Steep penalties for submitting AI slop to the arXiv.

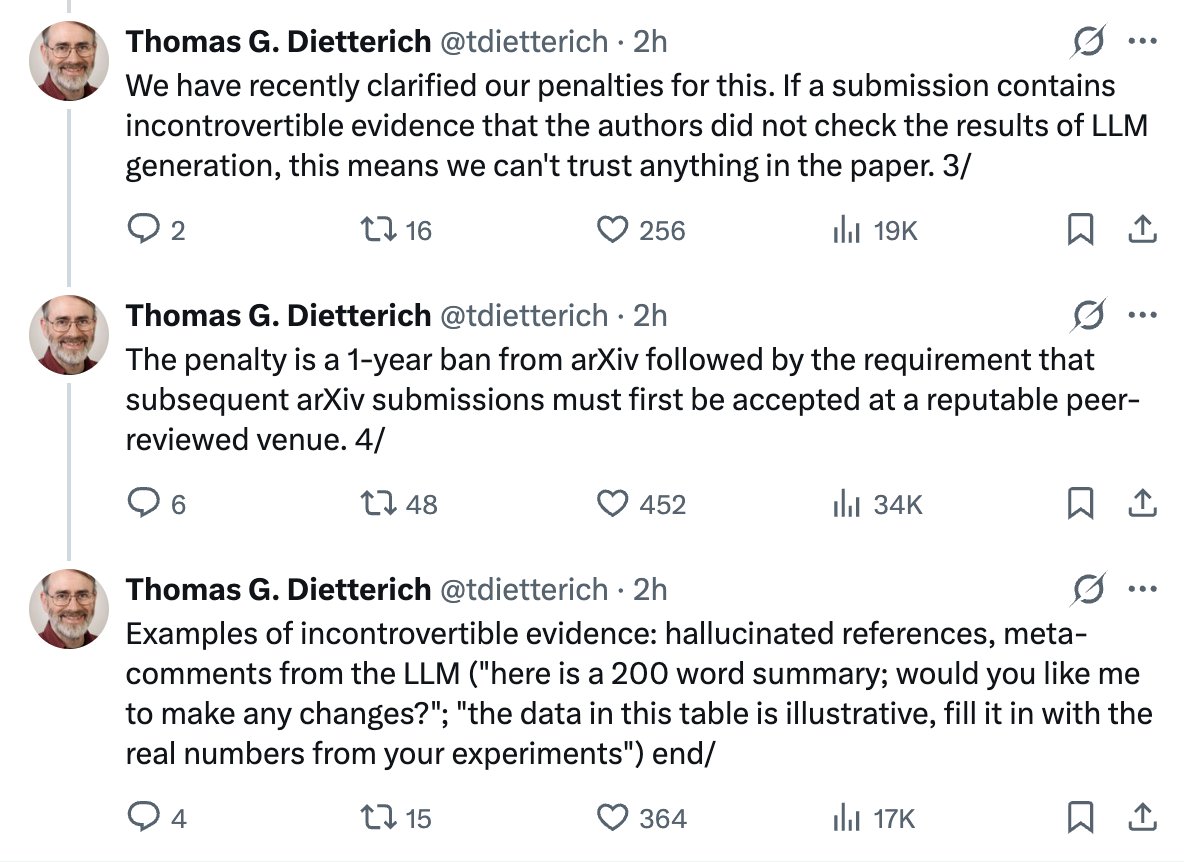

The penalty is a 1-year ban from arXiv followed by the requirement that subsequent arXiv submissions must first be accepted at a reputable peer-reviewed venue. 4/

There's a lot of controversy brewing around arXiv's decision to penalize authors who post unchecked AI generated content.

The impulse is correct, IMO, simply on grounds of efficiency: it is much cheaper to insist the authors vet their work first, rather than distributing the cost of that work to EVERY reader/agent who subsequently downloads the work.

I believe the mechanism is likely the wrong one, however. Unfortunately, suggestions to use github are even worse, IMO, because they lose the (effective) immutability of the scientific record, which arXiv upholds.

We have recently clarified our penalties for this. If a submission contains incontrovertible evidence that the authors did not check the results of LLM generation, this means we can't trust anything in the paper. 3/

@DimitrisPapail I see your points, but I think you may also be discounting just how curated Arxiv already is. @tdietterich and others reject a ton of low-quality submissions. There are problems with the LLM proposal, but the mods want to maintain something similar to the current quality bar.

Found myself posting papers to GitHub instead of arXiv lately. No gatekeeping, it lives in the same repo as the code, one link covers everything, and it gets uploaded immediately. Makes you wonder what arXiv's actual value is.

If AI agents assist you in writing your paper and you don't want to go to arXiv jail, our recent work publishes a tool that mitigates BibTeX citation hallucination via the agent's skill interface. You will have to add something like "use the clibib skill to discover new bibtex citations and check existing ones". Link below 👇

hallucinated references will land you a 1-year ban from arxiv now. wow

clibib is a Python package and an agent skill for fetching citations through natural language or the /clibib slash command. Works with any agent that supports the open standard — Claude Code, Codex CLI, Gemini CLI, OpenHands, GitHub Copilot, and others. https://github.com/delip/clibib

details about the skill: https://github.com/delip/clibib/blob/main/skill/README.md

@BlancheMinerva @ChenhaoTan There have been fraudulent papers on arXiv since before LLMs. The burden of deciding what is of value falls on the community, not on arXiv. The problem with policies like this is that they add more burden on the maintainers with little (if any) benefit, and add frustration for authors.

@DimitrisPapail @ChenhaoTan I think it is a very reasonable response to the deluge of fraudulent papers being submitted.

@roydanroy Keep Arxiv the way it is, this makes little sense. It's a repository not a gated venue, and that's good.

@roydanroy There has been a ton of slop on arXiv since before AI. The solution was never to review it before upload, but to ignore it after it was uploaded.

@DimitrisPapail @roydanroy This is a policy question that we think about often. How low can the signal-to-noise ratio go before arXiv becomes unusable? Our main goal in moderation is to keep non-scientific papers off of arXiv.

@_onionesque Why

@DimitrisPapail I don't think these two things are comparable. Requiring that an author has read what they have submitted seems categorically different.

I agree with Dan, this is an important signal that arXiv is a serious choice and should be treated as such.

I think execution success will depend on the clarity of the criteria used, e.g., an entirely made-up reference vs. one that has incorrect authors.

In an ideal world, I think we would have something like a jury system with publicly posted results to develop and communicate the standard.

@tdietterich Don't you think that the requirement for a subsequent submission is way too strict? It's like a life-long sentence.

Would @arxiv be interested in more thorough checks beyond this?

IMHO, the whole notion of an immutable and sacrosanct “paper” as THE main unit of scientific work output has been outdated by at least a few decades. The arrival of AI and knowledge-work-as-a-service is only making this fact more obvious, and in practical terms untenable going forward.

I am quite convinced that, under these arXiv guidelines, every single major PI in the field will be banned within a few years.

@anshulkundaje Agreed. We need to change the mindset from punishing people to helping people improve.

Wouldn't tagging papers that show issues with incontrovertible evidence (like hallucinated refs) be a much easier solution than this weird 1-year ban with a "reputable peer review" requirement (for a preprint server?!)?

If arXiv decides to do active gatekeeping on LLM slop, it also has the responsibility to release statistics on rejection rates and on-hold delays, and to explain its decision-making process more transparently.

Excellent 👏 Accountability is crucial for Slop

@roydanroy It’s not on arXiv to decide which papers are good, this is what conferences are for

arXiv now has a one-year ban for hallucinated references

@roydanroy Surprised to see comments by professors here about gatekeeping. They seem to have forgotten that you couldn't submit to arXiv directly unless you had a .edu ID. If you were outside academia, you needed an endorsement to be able to submit. This has been the case for 15 years.

Arxiv always had tons of slop, and was fine. Arbitrary gatekeeping mechanisms will only increase the chance that it withers away.

I don't know what's going to happen to the concept of a paper (or at least its evaluation) 2-3 years from now. But the last time there was an epidemic of slop, you had viXra, with no gatekeeping. This time we might be destined for a web where everything becomes one massive viXra.

Having hallucinated references in the submitted manuscript will land you a 1-year ban from arxiv.

Examples of incontrovertible evidence: hallucinated references, meta-comments from the LLM ("here is a 200 word summary; would you like me to make any changes?"; "the data in this table is illustrative, fill it in with the real numbers from your experiments") end/
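The second kind of evidence listed above, leftover LLM meta-comments, is plain text, so authors can run a crude self-check before submitting. Below is a minimal Python sketch, assuming the manuscript is available as plain text or LaTeX source. The phrase list and the `find_meta_comments` helper are hypothetical, modeled only on the two examples quoted in the thread; they are not arXiv's actual screening criteria.

```python
import re

# Hypothetical phrase list modeled on the meta-comments quoted in the thread.
# Illustrative only: this is NOT arXiv's screening procedure.
META_COMMENT_PATTERNS = [
    r"would you like me to",
    r"here is an? (?:\d+[- ]word )?summary",
    r"(?:data|numbers|values) in this table (?:is|are) illustrative",
    r"fill (?:it|this|them) in with the real",
    r"as an ai (?:language )?model",
]

# Compile once, case-insensitively, so "Here is" and "here is" both match.
_COMPILED = [re.compile(p, re.IGNORECASE) for p in META_COMMENT_PATTERNS]


def find_meta_comments(text: str) -> list[tuple[int, str]]:
    """Return (1-based line number, stripped line) for every suspicious line."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        if any(rx.search(line) for rx in _COMPILED):
            hits.append((lineno, line.strip()))
    return hits


if __name__ == "__main__":
    sample = (
        "We evaluate on three benchmarks.\n"
        "Here is a 200 word summary; would you like me to make any changes?\n"
        "The data in this table is illustrative, fill it in with the real numbers.\n"
    )
    for lineno, line in find_meta_comments(sample):
        print(f"line {lineno}: {line}")
```

A phrase list only catches verbatim leftovers and will miss paraphrased ones. It also cannot tell whether a cited paper exists, so hallucinated references need a lookup against real bibliographic sources, which is the kind of check the clibib skill mentioned earlier is pitched at.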

@roydanroy Also, what is the definition of a hallucinated reference?

Is the LaTeX compiler accidentally listing two NeurIPS editors as authors a hallucination?

Is a reference added in v2 at a reviewer's request a hallucination if it doesn't exist?

seems pretty intense for a mistake that could possibly happen to someone just reformatting text before a deadline (not the hallucinated references, but LLM meta-comments.)

Attention @arxiv authors: Our Code of Conduct states that by signing your name as an author of a paper, each author takes full responsibility for all its contents, irrespective of how the contents were generated. 1/

@TaliaRinger Is it? Then you should get a ban for misused references as well. Let's see who of us stays standing

Good tbh

I understand that arXiv has issues caused by blushing submissions, but this is way too strict.

Mistakes could slip into papers long before AI, and a single mistake slipping in isn't a sign that a paper is unchecked slop.

You can have a great paper with a line of prompt left in the supplementary. Do you deserve a lifetime ban?

@roydanroy Not for submitting AI slop. Steep penalties for making any mistake while using AI, even in a paper that is otherwise good.

Complete nonsense

Fully agree

@littmath I agree with you. As stated right now, it simply cannot work, or, worse, they will selectively protect a few famous people.

@LucaAmb My point is not that PIs won't have AI-generated text in their papers. It's that obviously arXiv will not ban all PIs. So either the guidelines will be enforced selectively (or, more generously, flexibly), or they will change.