xAI completes training run for Grok V9 model

xAI has completed an internal training run for its Grok V9 foundation model, which has 1.5 trillion parameters. That is three times the size of the 0.5 trillion parameter base behind the current Grok 4.2 release. Early results are reported as strong, ahead of supplemental training that will incorporate data from Cursor. Internal versioning marks Grok V9 as a distinct step from version 8, with major differences in scale and training approach.

We are soon going to get a new 1.5T model from xAI.

"The difference between (the current) Grok foundation model 8 and 9 is gigantic." ~ Elon Musk

Grok V9 is a 3x larger foundation model built to compete with top coding agents.

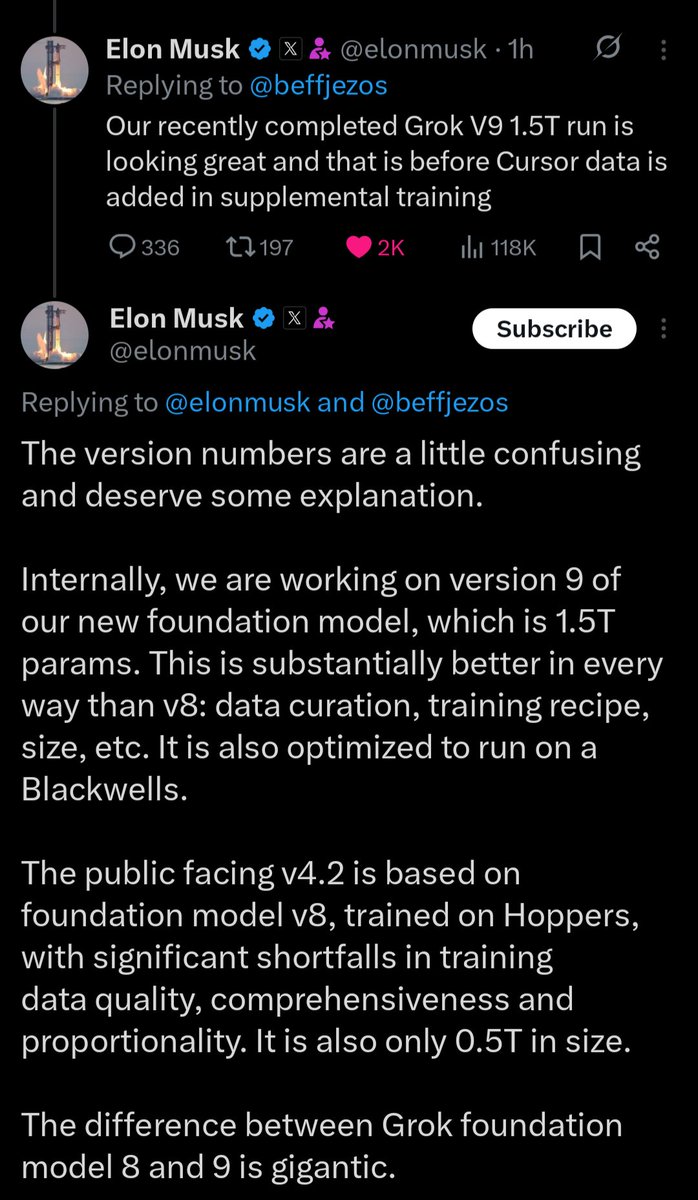

The version numbers are a little confusing and deserve some explanation. Internally, we are working on version 9 of our new foundation model, which is 1.5T params. This is substantially better in every way than v8: data curation, training recipe, size, etc. It is also optimized to run on a Blackwells. The public facing v4.2 is based on foundation model v8, trained on Hoppers, with significant shortfalls in training data quality, comprehensiveness and proportionality. It is also only 0.5T in size. The difference between Grok foundation model 8 and 9 is gigantic.

According to Elon, Grok 4.2 is based on foundation model v8: 0.5T parameters, trained on Hoppers, with major data-quality shortcomings.

The new v9 model is 1.5T parameters, trained with a better recipe, better data curation, and optimized for Blackwell.

A better model will heat up the competition even more.

Called it, they are gonna use Cursor’s data to leapfrog

@beffjezos Our recently completed Grok V9 1.5T run is looking great and that is before Cursor data is added in supplemental training

@theo Think they’ll actually leapfrog?
