Researchers introduce QuantSightBench for LLM forecasting evaluation

Jeremy Qin and Maksym Andriushchenko introduced QuantSightBench to measure how accurately frontier LLMs produce numerical forecasts and calibrated prediction intervals. The benchmark scores models on open-ended questions from business and politics using coverage rate, mean log interval score, and mean absolute percentage error. Supporting resources include an arXiv paper, the aisa-group/quantsightbench GitHub repository with evaluation code, and a live leaderboard at quantsightbench.com.
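As a rough illustration of two of the metrics named above, here is a minimal sketch of an interval score and of mean absolute percentage error. The function names are hypothetical and are not taken from the aisa-group/quantsightbench repository. The interval score shown is the standard (Winkler) form for a central interval, and the benchmark's log-scaled variant is not reproduced here.

```python
def interval_score(lower, upper, actual, alpha):
    """Standard interval score for a central (1 - alpha) prediction interval.

    Lower is better: the score is the interval width plus a penalty,
    scaled by 2 / alpha, for how far the outcome falls outside the interval.
    (Assumption: this is the textbook form, not the benchmark's own code.)
    """
    width = upper - lower
    below = (2.0 / alpha) * max(lower - actual, 0.0)  # outcome under the lower bound
    above = (2.0 / alpha) * max(actual - upper, 0.0)  # outcome over the upper bound
    return width + below + above


def mape(points, actuals):
    """Mean absolute percentage error in percent; assumes no actual value is zero."""
    errors = [abs(p - a) / abs(a) for p, a in zip(points, actuals)]
    return 100.0 * sum(errors) / len(errors)
```

A wide interval that contains the outcome pays only for its width, while a narrow interval that misses pays a penalty that grows with the nominal confidence level. That trade-off is why overconfident intervals score badly.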

@maksym_andr another tübingen banger

💥New paper: LLMs are now used for high-stakes real-world decisions, but can their numerical predictions and uncertainty estimates be trusted?

We built QuantSightBench, a benchmark to measure how well frontier models forecast numerical outcomes across business, politics, etc.

Why forecasting?

Forecasting of world events is a great testbed for general LLM decision-making. The real world produces so many things that can be forecast, and the objective ground truth eventually gets revealed. This is the ultimate benchmark: you want to predict how the real world will unfold.

Beyond producing accurate point-wise forecasts, correct uncertainty estimation is essential. LLMs typically don't produce consequential forecasts autonomously; rather, they assist human decision-making. This requires calibrated uncertainty estimation, which is also a necessary skill for *agentic* LLM forecasting: the agent needs to know when to acquire more information and when to stop and commit to an answer.

Why *numerical* forecasting?

Nearly all prior LLM forecasting work evaluates on binary Polymarket-style questions (which is great, btw). However, most decisions that actually matter (GDP growth, ARR numbers, election margins, infrastructure timelines) are not binary. They're numbers, and the confidence intervals there matter even more than the point estimates.

So we built a benchmark to measure this! This is joint work with Jeremy Qin @Jjq2221.

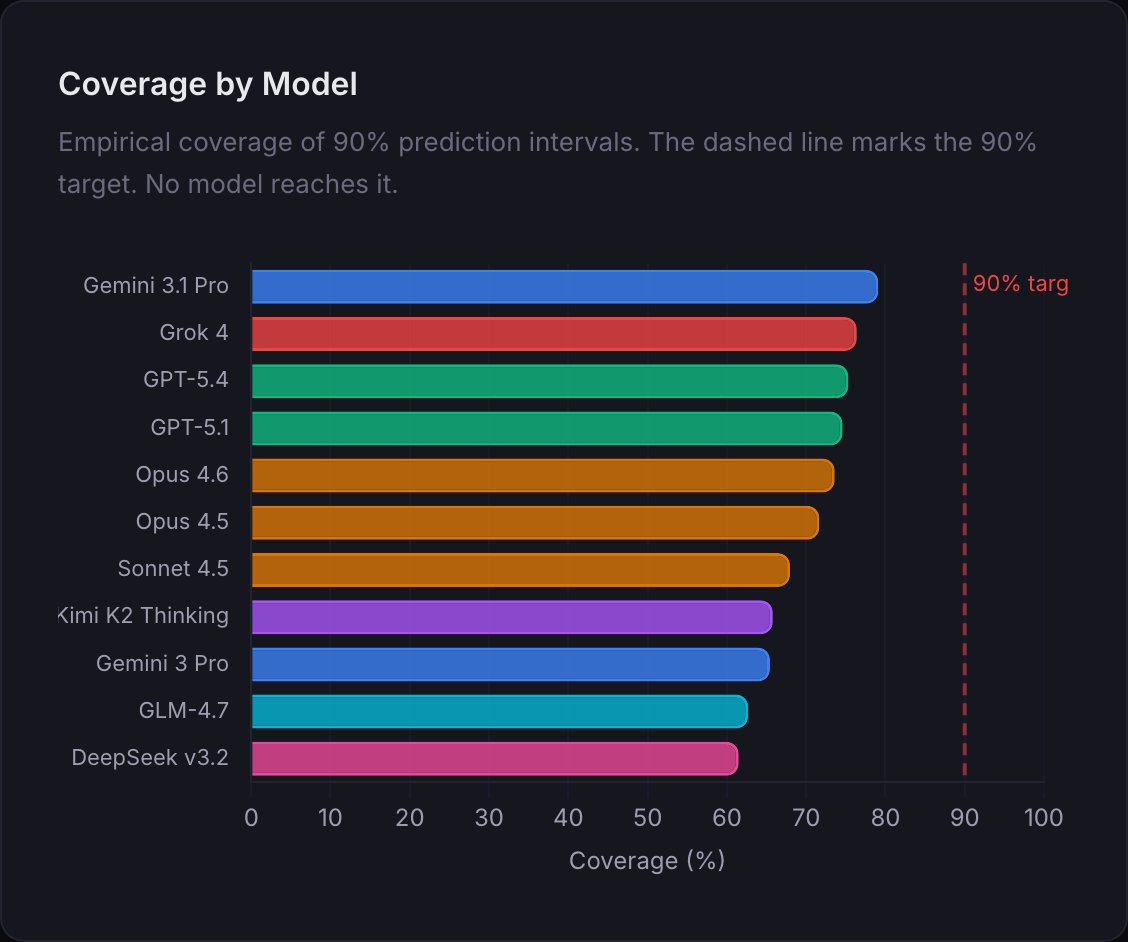

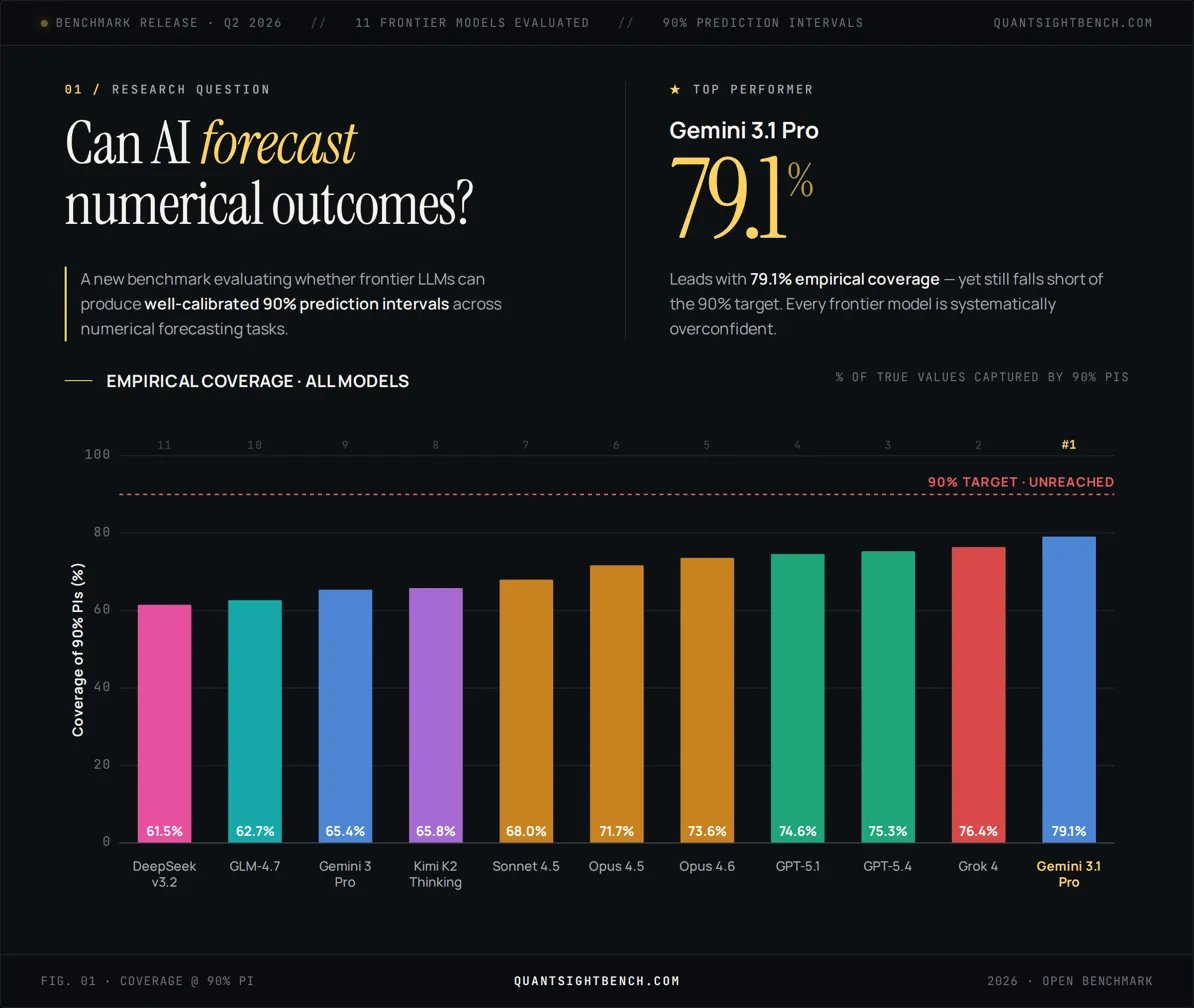

Main results: frontier models are systematically miscalibrated on their prediction intervals. They undercover at every nominal level we tested.

We test frontier models in an agentic setting on QuantSightBench, and control for temporal leakage, resolution ambiguity, and the other pitfalls flagged by @dpaleka et al. by building on the OpenForecast pipeline from @nikhilchandak29, @ShashwatGoel7 et al. (https://arxiv.org/abs/2512.25070).
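To make "undercover" concrete: a 90% prediction interval is calibrated if about 90% of resolved outcomes land inside it. Below is a minimal sketch of that check, with a hypothetical helper name and toy numbers that are purely illustrative and are not benchmark data.

```python
def empirical_coverage(intervals, actuals):
    """Fraction of outcomes that fall inside their (lower, upper) interval."""
    hits = sum(lo <= y <= hi for (lo, hi), y in zip(intervals, actuals))
    return hits / len(actuals)


# Toy example (made-up values): four forecasts, each stated as a 90% interval.
nominal = 0.90
intervals = [(90, 110), (40, 60), (0.5, 1.5), (1000, 1200)]
actuals = [104, 75, 1.2, 1250]

observed = empirical_coverage(intervals, actuals)  # 2 of 4 intervals contain the outcome
gap = nominal - observed  # positive gap = undercoverage (intervals too narrow)
print(f"nominal={nominal:.2f} observed={observed:.2f} gap={gap:.2f}")
```

A positive gap means the intervals are too narrow for the confidence level claimed, which is the pattern reported here at every nominal level tested.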

Model-level takeaways:

- Grok 4 is genuinely good at forecasting: it beats GPT-5.4 👀
- But Gemini 3.1 Pro is still on top overall
- Open-weight models lag meaningfully behind the frontier on interval calibration: the best proprietary model is Gemini 3.1 Pro (79.1% coverage at the 90% PI), while the best open-weight model is Kimi K2 Thinking (65.8%).

- Website: https://quantsightbench.com/
- Paper: https://arxiv.org/abs/2604.15859
- Code: https://github.com/aisa-group/quantsightbench

Almost everything you see is done by my amazing student @Jjq2221! That's the first paper of his PhD, and we are actively preparing some other cool projects :) stay tuned!
