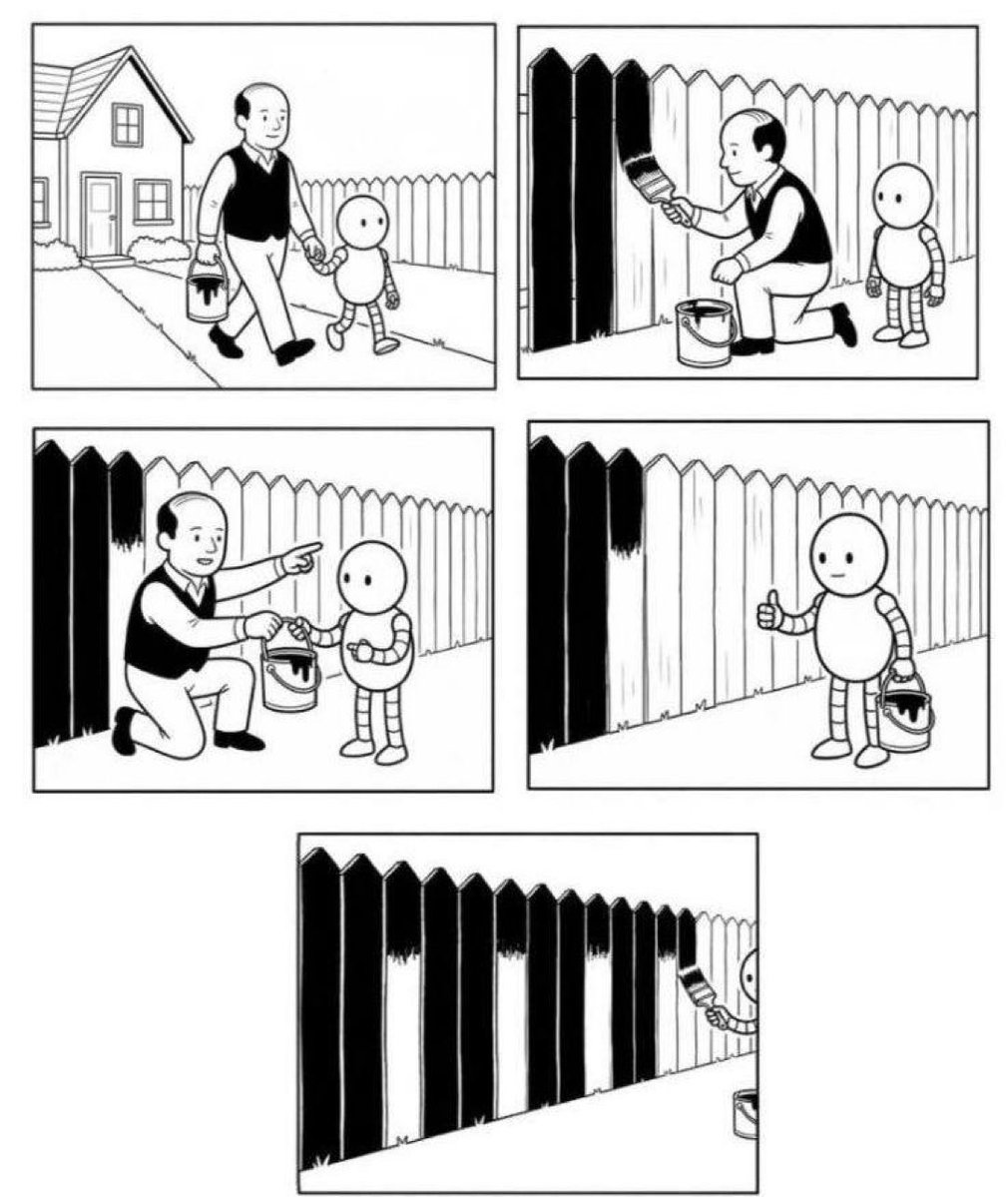

Comic illustrates in-context learning in language models

The comic attached to arxiv.org/pdf/2301.13379 shows a five-panel sequence of a bald man teaching a smaller round-headed figure to paint a fence by demonstrating the process, handing over supplies, and leaving the figure to complete alternating black-and-white panels alone. Rishabh Agarwal notes that GPT-3 established language models as few-shot in-context learners while later work optimizes for specific high-value tasks.

In-context learning in LLMs

There is a nice sequence of papers that tells the story of why. Will write more about this when I have the time, but the tl;dr is: 1) faithfulness is hard to satisfy with a next-token objective, and 2) the high statistical regularity of code makes codegen models highly reliable. The combination of these two broad-stroke ideas resulted in a proliferation of methods like this one, e.g. https://arxiv.org/pdf/2301.13379
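One way to read the two points together: if the model writes its reasoning as a program, the final answer comes from running that program instead of from more next-token sampling. Below is a minimal sketch of that idea, not the method of the linked paper. The `generated_program` string is a hypothetical stand-in for model output (no model is called), and `run_generated_program` is an illustrative helper name.

```python
# Hypothetical stand-in for code a codegen model might emit for the question:
# "A fence has 12 panels painted alternately black and white, starting with
# black. How many panels are black?"
generated_program = """
panels = 12
black = (panels + 1) // 2  # panels at positions 0, 2, 4, ... are black
answer = black
"""


def run_generated_program(source: str) -> int:
    """Execute model-written code and return the value it binds to `answer`."""
    namespace: dict = {}
    # Builtins are removed to limit what the code can call; this is an
    # illustration only, not a real sandbox for untrusted code.
    exec(source, {"__builtins__": {}}, namespace)
    return namespace["answer"]


# The answer is produced by the interpreter, so it follows from the written
# steps by construction.
answer = run_generated_program(generated_program)
print(answer)
```

The design choice here is that the interpreter, not the model, produces the final value, so the stated steps and the answer cannot disagree.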

GPT-3 was a sensation because it claimed language models are few-shot in-context learners. I wonder why we dropped the ball on in-context learning and moved to mostly execution-oriented research on LLMs: training on any task of high value, a set that will keep increasing with no end in sight. Maybe we'll get these data centers with geniuses, but they are *only* geniuses on tasks that your favourite frontier lab decides to directly or indirectly optimize for.

